Many business websites have key problems, such as broken internal links and duplicate title tags, that hamper search engines' ability to crawl and index them effectively, according to recent research from SEMrush.

The report was based on an analysis of the websites of 250,000 businesses operating in a wide range of verticals, including health, travel, and science. The researchers used SEMrush's Site Audit tool to scan each website for more than 130 common search engine optimization issues. They then grouped the issues into three levels of severity: errors (the most critical), warnings, and notices. The full report covers the methodology and findings in detail.

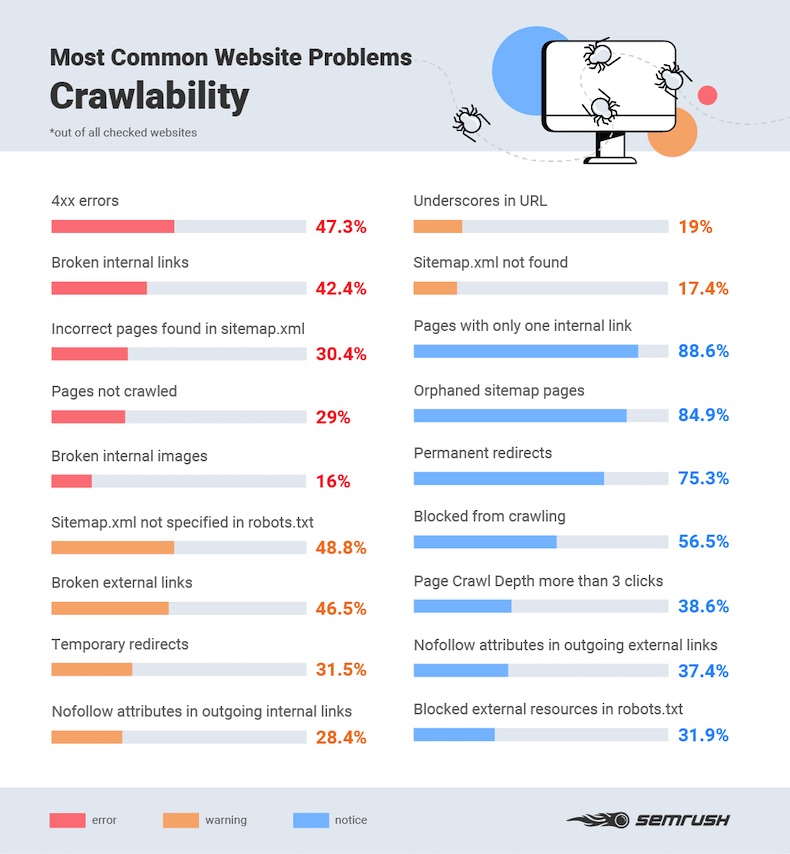

Crawlability problems

The most common major problems affecting crawlability (how easily search engine crawlers can access and navigate a site's pages) are 4xx errors such as 404s (found on 47% of sites examined), broken internal links (42%), and incorrect pages in the sitemap (30%).

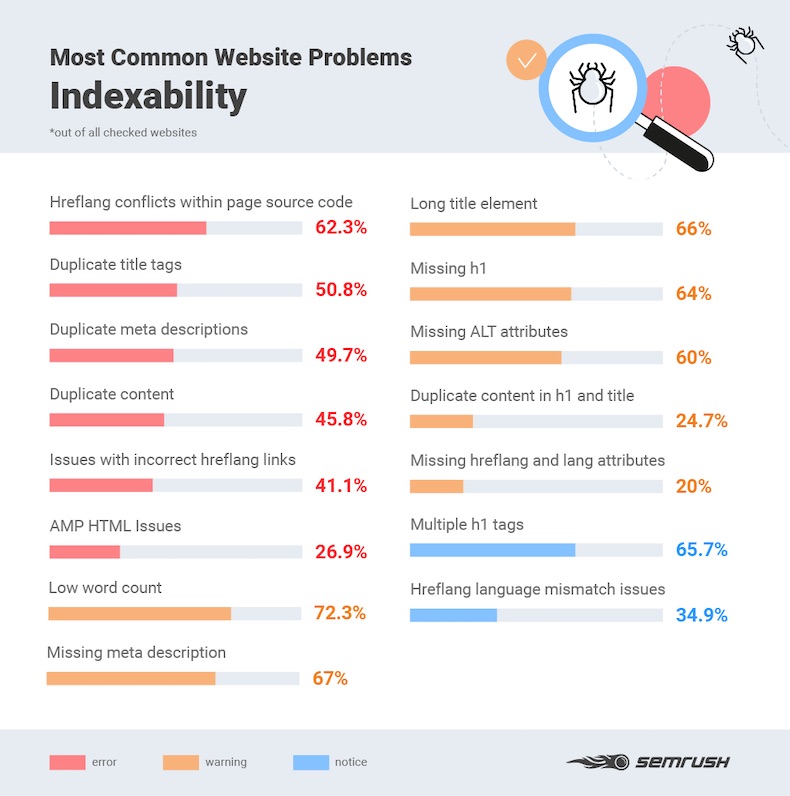

Indexability problems

The most common major problems affecting indexability (how well search engines can index a site's pages) are hreflang conflicts (found on 62% of sites examined), duplicate title tags (51%), and duplicate meta descriptions (50%). An hreflang conflict occurs when the language specified for a page on a multilingual website conflicts with what the page's source code declares.
